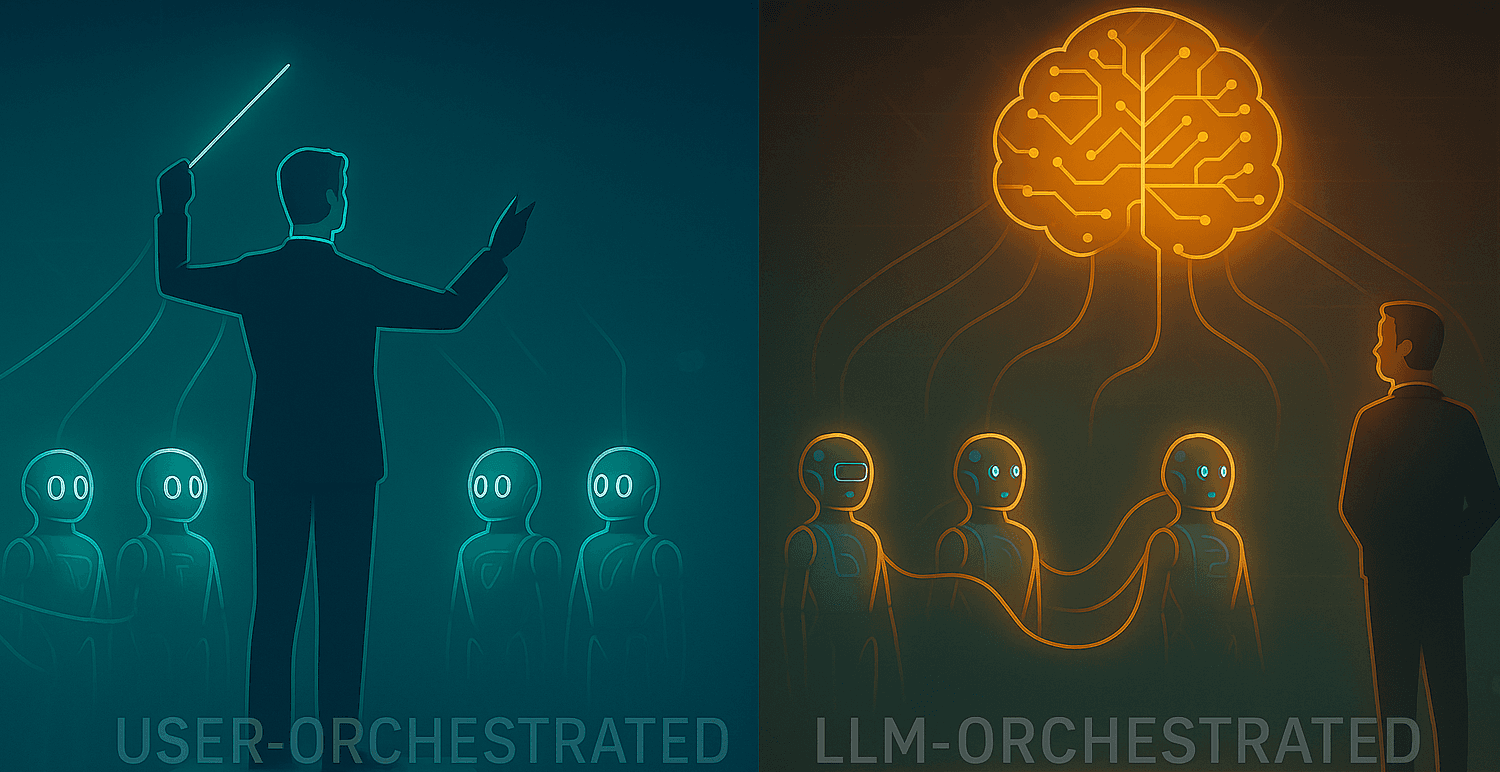

Would you let the LLM take the wheel?

A practical, friendly guide to User-Orchestrated vs LLM-Orchestrated agent workflows

Agents are a bit like actors on a stage. Somebody — or something — has to call “Action!”, cue the right lines, and decide when the show ends. In modern AI architectures that somebody can be the human developer, or the LLM itself. That choice — who orchestrates — shapes reliability, transparency, cost, and the kinds of problems your system can solve (or create 😀).

This blog explains the two orchestration patterns, shows how they behave using two simple projects I built (user-orchestrated vs LLM-orchestrated flows), walks through what the LLM is “thinking” when it calls other agents, and gives clear, real-world guidance on when to pick each pattern. Through this blog it becomes apparent, why absolute autonomy is not always good.

Two orchestration patterns (the short version)

1) User-Orchestrated Flow — the human (or program) is the conductor.

You explicitly call agents in a fixed sequence: “Call A, then B, then C.” Great when you need deterministic, auditable pipelines. Think compliance reports, billing, or anything where skipping a step is unacceptable.

2) LLM-Orchestrated Flow — the LLM is the conductor. An agent has tools (which can be plain functions or other agents) and dynamically decides which to call and when. This shines for adaptive, exploratory tasks — troubleshooting, creative ideation, diagnostics — where the correct flow depends on content, context, and nuance.

How these patterns look in practice

I built two OpenAI Agents SDK projects using Python to explore the two different ways of orchestrating the flow.

The project is a trivial and simple one, but helps understand the concept - there are 3 sales agent each with a different personlity type (Humorous / Serious / Professional). These agents generate sales email draft. A fourth agent takes the 3 drafts as inputs, and chooses the single best draft and sends the email.

Project A: User-Orchestrated flow

The Python code calls three sales agent functions in parallel (Humorous / Serious / Professional).

The host script collects their outputs and passes them into a Picker agent.

The Picker outputs a selection and

function_call; your code callssend_email

Properties

Flow is explicit, predictable, and easy to audit.

The orchestration logic sits in code (not the LLM).

Traces show a linear, developer-specified sequence of LLM calls.

Project B: LLM-Orchestrated flow

The Picker Agent is given the three sales agents as tools (using the OpenAI Agent

agent.as_tool()API) .You call the Picker once. Inside its reasoning, it decides when and which sales agents to call, evaluates outputs, and decides whether to call

send_email.Traces show nested and dynamic LLM calls: the Picker’s call includes sub-calls to the sales agents and the final

send_emailinvocation.

Properties

Orchestration is emergent, decided in the model’s chain-of-thought.

Less host-side boilerplate; more autonomy for the agent.

Difficult to reason about because of agent’s autonomy.

Inspecting OpenAI traces

User orchestrated

In the user-orchestrated version, every step of the workflow is explicitly controlled by the user (or a top-level script). Each sales agent is called independently, their results collected by the user, and finally, the user invokes the picker agent. You can see in the trace that all three sales agents complete their calls separately — the LLMs do not interact with each other — and the picker agent is invoked only after the user collects their outputs.

This pattern offers predictability and transparency, making it easier to debug and monitor, but it also requires the user (or a coordinating system) to handle sequencing and logic manually.

LLM orchestrated

Here, the Picker Agent takes over as the orchestrator. Notice how it calls each specialized Sales Agent as a tool — visible in the nested traces — and then makes a final call to send_email. The Picker Agent’s LLM decides which sub-agents to invoke, when to stop, and how to rank the results, all without explicit user direction.

This pattern demonstrates Agent-as-Tool composition: agents invoking other agents as tools. It’s more autonomous and adaptable, allowing the LLM to coordinate reasoning across multiple roles, though at the cost of more complexity and potential latency (as shown by the longer overall runtime).

When is one better than the other?

Example 1 : Financial compliance reporting

Better choice: User orchestrated flow

Why: legal/regulatory contexts require deterministic control, stepwise validation, and auditable intermediate outputs. Let humans define exactly what happens, in which order, and when approvals are required. An LLM improvising or skipping checks is unacceptable.

Pattern: User orchestrates; the LLMs are specialized tools that cannot change the pipeline.

Key priorities: audit logs, checkpoints for human review, deterministic ordering, strict input validation.

Example 2 : Customer technical troubleshooting

Better choice: LLM orchestrated flow

Why: troubleshooting is branching, context-dependent, and hard to hardcode. The LLM can decide whether to analyze logs, query docs, run a diagnostic, or escalate — in real time. This reduces brittle flow charts and yields a more natural customer experience.

Pattern: LLM orchestrates; sub-agents (log parser, KB search, diagnostics) are provided as tools/sub-agents. The LLM composes them adaptively.

Key priorities: adaptivity, concise context passing, capable sub-agents, careful rate limits & cost controls.

Final thought

When I built the LLM-orchestrated version of my workflow, I expected it to choose the single best email and send it. Instead, it sent all three.

The developer instinct took me down the debugging path for a moment. Then it hit me — the model wasn’t following broken logic; it was following its own reasoning. It had concluded, in its own way, that sending all options might be a better outcome. The more autonomy we give LLMs, the more their behavior reflects interpretation rather than execution.

Traditional software runs instructions. LLMs interpret intent. And intent can be ambiguous. You can refine prompts, add guardrails, and constrain tools — and still get different outcomes on different runs. That’s not always a flaw; it’s the nature of probabilistic reasoning.

In a user-orchestrated flow, control lives outside the model. The human decides which agents to call and when — ensuring predictability at the cost of flexibility.

In a LLM-orchestrated flow, control moves inside the model. The agent itself decides how to sequence steps, which tools to invoke, and when to stop. It can adapt, but it can also surprise you.

This unpredictability raises a question that every AI engineer eventually faces:

How much control are we willing to hand over to systems that can reason?

As developers, we’re used to determinism — same input, same output. But LLMs don’t think in binaries. They weigh, interpret, and improvise. Their behavior isn’t guaranteed; it’s guided. And as we start using them as orchestrators, we’re not just designing workflows — we’re designing boundaries for reasoning.

The LLM sending three emails instead of one wasn’t a failure of orchestration. It was a reminder that autonomy and predictability live in tension. Our job, as builders of agentic systems, is not to eliminate that tension — but to engineer responsibly within it. Carefully choose the pattern that best serves your use case. And in some cases, you might even combine both — creating controlled autonomy that lets creativity flow within the boundaries you define.

So — would you let the LLM take the wheel?

For further reading and learning

Learn AI agents with Ed Donner

Anthropic’s blog on effective agents

Building AI Agents with Anthropic’s Barry Zhang , Erik Schluntz (YouTube video)