Data Virtualisation: Reshaping How Enterprises Access Data

Data has always been the lifeblood of enterprise decision-making. But for decades, getting that data to the right person, at the right time, in the right format has been a costly, fragile, and frustrating endeavour. Extract, transform, load. Wait. Repeat. While ETL pipelines quietly chugged away in the background, businesses were left looking at yesterday's data trying to make tomorrow's decisions.

Data virtualisation challenges that status quo — fundamentally. And the industry is finally paying attention.

1. History of Data Virtualisation: The Journey Until Now

The story of data virtualisation didn’t begin with a grand architectural vision. It began the way most enterprise innovations do — with a pile of problems and a growing sense of “there has to be a better way.”

The 1990s: The Federated Query Era

In the early 1990s, as relational databases proliferated across enterprises, the need to query across multiple systems simultaneously became apparent. IBM's federated database technology, built into DB2, was one of the earliest attempts at this. You could write a single SQL query that would fan out to multiple databases and bring the results back together. It was clunky, limited, and slow — but the seed of an idea had been planted.

Early 2000s: Enterprise Information Integration (EII)

By the early 2000s, computing power had grown significantly, and vendors began positioning federated query capabilities as a broader category: Enterprise Information Integration, or EII. The term was first coined by MetaMatrix and represented a fundamental shift — rather than physically moving data, you would create a virtual layer that made disparate sources look like a single, unified database. Denodo released its very first version (v1.0) in 2002, built from the ground up around this concept.

The appeal was obvious: no data replication, no staging tables, no brittle ETL pipelines. Just a logical layer that abstracted the complexity underneath.

2005–2015: The Rise of the Data Warehouse — and the Cracks

During the same period, the data warehouse was at its peak. Teradata, Netezza, and SQL Server became the backbone of enterprise analytics. But warehouses had a problem: they required everything to be moved, transformed, and loaded before it could be queried. As the volume and variety of data exploded — driven by digital transformation, mobile, and the early SaaS wave — the ETL bottleneck became impossible to ignore.

Hadoop arrived around 2008 as a response to the scale problem. But Hadoop was complex, opaque, and slow for interactive analytics. The result? Enterprises ended up with a patchwork: warehouses, data lakes, operational databases, and dozens of SaaS platforms, all siloed, all requiring their own integration pipelines. This fragmentation didn't just create a data problem; it created an industry. ETL tools, integration middleware, and data pipeline vendors collectively grew into a multi-billion-dollar category built almost entirely around the cost of moving data that probably shouldn't have needed moving.

This fragmentation created exactly the conditions data virtualisation was built for.

2015–2020: Data Virtualisation Goes Mainstream

As cloud computing matured and the microservices revolution dispersed data even further across organisations, virtualisation platforms like Denodo, TIBCO Data Virtualization, and IBM Data Virtualisation began gaining serious enterprise traction. They were no longer niche EII tools — they were positioned as semantic layers and data fabric enablers, providing unified access, governance, lineage, and security across the entire data estate.

The concept of the Data Fabric — which Gartner began evangelising heavily from 2019 onwards — placed data virtualisation at its architectural heart.

2020–Present: The Lakehouse Era and a New Question

The emergence of the lakehouse (Databricks, Snowflake, Apache Iceberg) and a new generation of SQL query engines (Trino, Starburst, DuckDB) triggered a new architectural reassessment. Suddenly, you had yet another powerful physical data store, yet another query engine, and organisations were asking: do we still need virtualisation?

The answer, as we will explore, is nuanced. But what is clear is that data virtualisation has evolved from a niche integration tool into a foundational enterprise architecture pattern. The journey has taken it from clunky federated SQL in the 1990s to a sophisticated, AI-ready logical data management layer in 2026.

2. What Is Data Virtualisation?

At its core, data virtualisation is deceptively simple: it creates a unified, virtual layer over disparate data sources — without physically moving or replicating the data.

Think of it like a universal remote control. Your TV, sound system, streaming box, and gaming console all have their own interfaces and protocols. A universal remote doesn't replace any of them — it sits on top and lets you control everything through one interface. Data virtualisation does the same for your data sources.

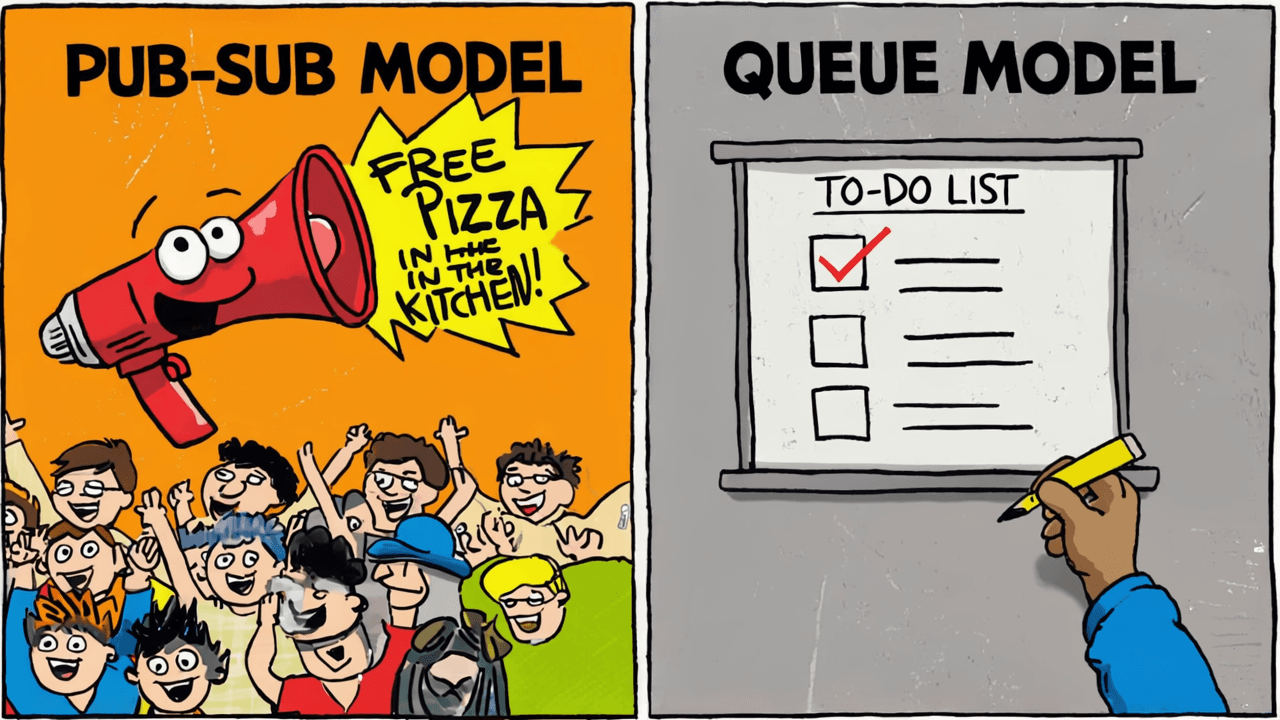

When a business user or application sends a query to a data virtualisation platform, the platform:

Intercepts the query at the virtual layer

Translates it into the native language of each relevant source (SQL for databases, REST calls for APIs, SPARQL for graphs, etc.)

Executes the query in parallel across sources using pushdown optimisation

Federates the results back and presents a unified response
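To make those four steps concrete, here is a minimal sketch in Python. It is an illustration under stated assumptions, not how any particular platform is implemented: the two in-memory SQLite databases stand in for a CRM and a billing system, and every name in it (`crm`, `billing`, `revenue_by_customer`) is hypothetical.

```python
import sqlite3
from concurrent.futures import ThreadPoolExecutor


def make_source(ddl, insert_sql, rows):
    """Create an in-memory SQLite database standing in for one source system."""
    # check_same_thread=False lets a worker thread use this connection later.
    conn = sqlite3.connect(":memory:", check_same_thread=False)
    conn.execute(ddl)
    conn.executemany(insert_sql, rows)
    return conn


# Hypothetical sources: a CRM and a billing system.
crm = make_source(
    "CREATE TABLE customers (id INTEGER, name TEXT, region TEXT)",
    "INSERT INTO customers VALUES (?, ?, ?)",
    [(1, "Acme", "EMEA"), (2, "Globex", "APAC"), (3, "Initech", "EMEA")],
)
billing = make_source(
    "CREATE TABLE invoices (customer_id INTEGER, amount REAL)",
    "INSERT INTO invoices VALUES (?, ?)",
    [(1, 100.0), (1, 50.0), (2, 75.0), (3, 20.0)],
)


def fetch(conn, sql, params):
    return conn.execute(sql, params).fetchall()


def revenue_by_customer(region):
    """Virtual view: revenue per customer for one region."""
    # Steps 1-2: the virtual query is translated into one native query per
    # source. The region filter is pushed down to the CRM and the SUM is
    # pushed down to billing, so only small result sets leave each source.
    plans = [
        (crm, "SELECT id, name FROM customers WHERE region = ?", (region,)),
        (billing,
         "SELECT customer_id, SUM(amount) FROM invoices GROUP BY customer_id",
         ()),
    ]
    # Step 3: run the source queries in parallel, one thread per source.
    with ThreadPoolExecutor(max_workers=len(plans)) as pool:
        futures = [pool.submit(fetch, *plan) for plan in plans]
        customers, totals = [f.result() for f in futures]
    # Step 4: federate, joining the partial results in the virtual layer.
    total_by_id = dict(totals)
    return sorted((name, total_by_id.get(cid, 0.0)) for cid, name in customers)
```

Calling `revenue_by_customer("EMEA")` returns one row per EMEA customer with its invoice total. The consumer never sees that two systems were involved.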

The result? A user in a BI tool sees a clean, business-friendly view of "Customer 360" — without needing to know that the data lives across Salesforce, SAP, an Oracle database, and three REST APIs.

What makes modern data virtualisation different from old federated query?

Modern platforms go far beyond simple query federation. They include:

Semantic modelling — business-friendly naming, calculated metrics, and reusable logical entities

Row- and column-level security — fine-grained access control enforced at the virtual layer

Intelligent caching — frequently accessed results are materialised in-memory or on fast storage, so not every query hits the source

Data lineage and cataloguing — full visibility into where data comes from and how it flows

REST and GraphQL APIs — so data isn't just queryable via SQL but also accessible to applications and AI agents

Active metadata — using AI and ML to automatically suggest optimisations, detect anomalies, and enrich the semantic layer
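Row- and column-level security is the easiest of these to picture in code. The sketch below assumes a hypothetical policy table keyed by role. Real platforms express this in their own policy languages, so treat the names (`Policy`, `POLICIES`, `secure_view`) as illustrative only.

```python
from dataclasses import dataclass
from typing import Any, Callable, Dict, FrozenSet, List

Row = Dict[str, Any]


@dataclass(frozen=True)
class Policy:
    visible_columns: FrozenSet[str]        # column-level rule
    row_predicate: Callable[[Row], bool]   # row-level rule


# Hypothetical roles and rules, defined once at the virtual layer.
POLICIES = {
    "emea_analyst": Policy(
        frozenset({"customer", "region", "revenue"}),
        lambda row: row["region"] == "EMEA",
    ),
    "auditor": Policy(
        frozenset({"customer", "region", "revenue", "tax_id"}),
        lambda row: True,
    ),
}


def secure_view(rows: List[Row], role: str) -> List[Row]:
    """Apply the role's row filter, then drop columns the role may not see."""
    policy = POLICIES[role]
    return [
        {col: val for col, val in row.items() if col in policy.visible_columns}
        for row in rows
        if policy.row_predicate(row)
    ]


ROWS = [
    {"customer": "Acme", "region": "EMEA", "revenue": 150.0, "tax_id": "X1"},
    {"customer": "Globex", "region": "APAC", "revenue": 75.0, "tax_id": "X2"},
]
```

With this in place, `secure_view(ROWS, "emea_analyst")` yields only the EMEA row and omits `tax_id`, while the auditor role sees every row and column. The point of the design is that the rule lives in one place rather than in each consuming tool.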

Data virtualisation is not anti-ETL. It's an alternative integration pattern — one that favours real-time, governed, logical access over physical data movement.

3. Industry Trends and the Importance of Data Virtualisation

The market numbers tell a compelling story.

The global data virtualisation market is projected to grow at a compound annual growth rate (CAGR) of over 20%, potentially reaching USD 22.83 billion by 2032. North America currently holds the largest market share, while Asia Pacific is the fastest-growing region.

But beyond the numbers, four structural forces are making data virtualisation increasingly important:

1. The Proliferation of SaaS and Cloud

The average enterprise now uses hundreds of SaaS applications — Salesforce, Workday, ServiceNow, NetSuite, HubSpot. Each of these is a silo. ETL pipelines into a central warehouse were workable when you had 10 systems; they become a maintenance nightmare at 200. Data virtualisation provides a live, unified access layer across SaaS estates without the replication overhead.

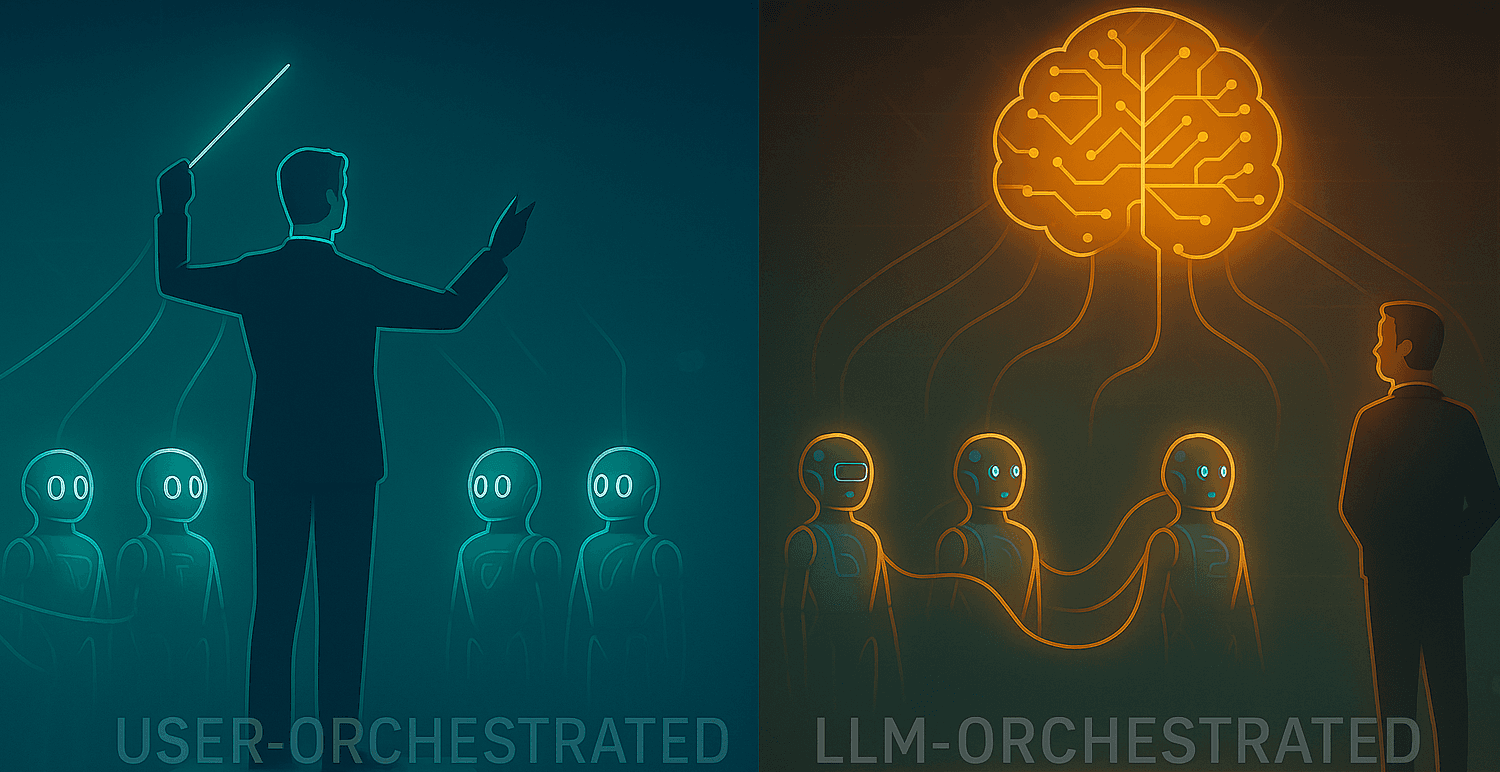

2. The Rise of AI and Agentic Architectures

AI agents are increasingly being used to query data in real time for reasoning and decision-making — not batch-processing it for training. This use case plays directly to virtualisation's strengths: fresh, governed, real-time data access without stale warehouse snapshots. As agentic AI matures, virtualisation-first architectures become even more attractive.

3. Data Mesh and Distributed Ownership

The data mesh paradigm — where data ownership is distributed to domain teams — creates a governance challenge: how do you maintain a unified consumer experience when data is produced and owned by many different teams? Data virtualisation provides the answer: a federated semantic layer that abstracts the distributed reality while presenting a coherent interface to consumers.

4. Regulatory and Data Sovereignty Pressures

Regulations like GDPR, CCPA, and emerging AI governance frameworks require organisations to know exactly where sensitive data lives and who can access it. Data virtualisation, with its centralised policy enforcement and lineage capabilities, makes compliance significantly more tractable than managing it across dozens of physical copies of data.

4. Data Virtualisation and ETL — Why You Almost Certainly Need Both

Let me be direct about something that a lot of vendor marketing conveniently glosses over: data virtualisation alone is not sufficient for complex enterprise analytics workloads. I know that's not the headline anyone building a Denodo pitch deck wants to read, but it is the truth, and understanding why is what lets you position the right solution for your environment.

The question is not "virtualisation or ETL?" The question is "which jobs belong to which tool?" And once you see the distinction clearly, the answer becomes obvious.

Where ETL and ELT remain non-negotiable

There is a category of analytical work where the output needs to exist as a persistent, pre-computed artefact — and no amount of clever query federation gets you out of that. Specifically:

Aggregations and enriched business views. Consider a SUM(revenue) GROUP BY region, product, week across five billion transaction rows, being queried by 300 concurrent BI users. You cannot push that computation back to the source system at query time — you will simply bring it down. The aggregate needs to be materialised somewhere physical. Data virtualisation can serve that result beautifully, but ETL produced it. The two are not competing here — they are sequential.

More importantly: enriched business views are business assets. When your data team has spent weeks joining customer records with transaction history, applying lifetime value formulas, and building a canonical "Customer 360" that finance, marketing, and operations all agree on — that view should be materialised and governed as a first-class artefact. It is not a query you want to recompute from scratch every time someone opens a dashboard.
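A small sketch of the "ETL produces it, virtualisation serves it" split, using an in-memory SQLite database as a stand-in for the lakehouse. The table and function names (`transactions`, `weekly_revenue`, `refresh_weekly_revenue`, `serve_weekly_revenue`) are assumptions made for illustration.

```python
import sqlite3

conn = sqlite3.connect(":memory:")
conn.execute(
    "CREATE TABLE transactions "
    "(region TEXT, product TEXT, week INTEGER, revenue REAL)"
)
conn.executemany(
    "INSERT INTO transactions VALUES (?, ?, ?, ?)",
    [
        ("EMEA", "widget", 1, 10.0),
        ("EMEA", "widget", 1, 15.0),
        ("EMEA", "gadget", 1, 7.0),
        ("APAC", "widget", 2, 4.0),
    ],
)


def refresh_weekly_revenue(conn):
    """Batch (ETL) step: scan the raw rows once and persist the aggregate."""
    conn.execute("DROP TABLE IF EXISTS weekly_revenue")
    conn.execute(
        """
        CREATE TABLE weekly_revenue AS
        SELECT region, product, week, SUM(revenue) AS revenue
        FROM transactions
        GROUP BY region, product, week
        """
    )
    conn.commit()


def serve_weekly_revenue(conn, region):
    """Serving step: dashboards read the small aggregate, never the raw table."""
    return conn.execute(
        "SELECT product, week, revenue FROM weekly_revenue "
        "WHERE region = ? ORDER BY product, week",
        (region,),
    ).fetchall()


refresh_weekly_revenue(conn)
```

Every dashboard load now costs a lookup against `weekly_revenue`. The expensive scan happens only when the batch job runs, however many users are querying.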

Windowing and ranking functions at scale. LAG(), LEAD(), RANK() OVER(PARTITION BY ...), rolling 30-day averages: these require the engine to sort and process each partition in full before it can return a correct result. Virtualisation engines can execute these, but unless the whole operation can be pushed down to a single source, they do so by pulling raw data across the wire first. At scale, pre-computing these via ETL in a lakehouse and serving the results via virtualisation is the sensible architecture.
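To show why the whole ordered partition matters, here is a plain-Python sketch of what LAG() and a rolling average have to do. It is a teaching aid rather than how a query engine is written, and the function name and row layout are hypothetical.

```python
from collections import deque
from itertools import groupby
from operator import itemgetter


def lag_and_rolling_average(rows, window):
    """rows are (partition_key, day, value) tuples.

    Returns (partition_key, day, value, previous_value, rolling_average),
    the equivalent of LAG(value) and AVG(value) over the last `window` rows
    within each partition, ordered by day.
    """
    result = []
    # The full input must be sorted by partition and day before any output
    # row can be produced. This is the part that is costly to federate.
    ordered = sorted(rows, key=itemgetter(0, 1))
    for key, partition in groupby(ordered, key=itemgetter(0)):
        recent = deque(maxlen=window)  # sliding window of the latest values
        previous = None                # LAG(value) for the current row
        for _, day, value in partition:
            recent.append(value)
            result.append((key, day, value, previous, sum(recent) / len(recent)))
            previous = value
    return result
```

Run once in a batch job and stored, the output becomes an ordinary table the virtual layer can serve. Run at query time over federated sources, the sort forces every raw row to travel to the engine first.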

Historical depth. Source systems purge or archive data. Your operational CRM does not store seven years of customer interactions. Your OLTP database does not retain every version of every record. A data warehouse or lakehouse is often the only place where that history lives in a queryable form — and virtualisation cannot conjure data that does not exist at the source.

ML and AI model training. Training a machine learning model requires repeated full scans over terabytes of data, shuffled and batched, co-located with compute. This is architecturally incompatible with query-time federation. You need the data physically present. Full stop.

Data quality, deduplication, and entity resolution. Identifying that "Akash Jain", "A. Jain", and "AJ" in three different systems are the same person is computationally expensive and produces a corrected record that needs to persist. You cannot fuzzy-match your way to a golden customer record on the fly at query time — at least not without making your users regret opening the dashboard.

So where does data virtualisation genuinely excel?

Virtualisation is the right answer — often the best answer — for:

Operational and real-time reporting where data freshness matters more than query performance

Self-service federated queries by analysts exploring data before it has been formally modelled

Regulatory and audit reporting that requires raw records from source systems with full lineage

Governed API-driven data products exposed to applications and AI agents

The semantic and governance layer that sits above your lakehouse, enforcing business definitions and access controls across everything

The architecture that actually works

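In any enterprise with real analytical complexity, the honest architecture layers the two: ETL or ELT builds the enriched, materialised assets, and virtualisation serves them alongside live sources.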

Data virtualisation does not replace the enrichment step — it sits above it, providing a governed, unified interface to the outputs of that enrichment. In this model, the lakehouse does not disappear — but it becomes a derived performance tier, not the source of truth. The virtualisation layer holds the semantic model, the governance rules, and the business logic that makes data actually mean something.

The right principle: virtualise first to explore and to serve; materialise when the workload demands it or when the business view is worth preserving as a managed asset.

For simpler deployments — a mid-size organisation, mostly SaaS data sources, modest query volumes, no ML ambitions — virtualisation alone may be genuinely sufficient. But if your analytics environment involves enrichment, windowing, high concurrency, or anything a data scientist would call "interesting", you need ETL in the stack.

5. Why Data Virtualisation?

Beyond the technical arguments, there are compelling business and operational reasons to invest in data virtualisation.

Speed to insight

Traditional ETL pipelines can take weeks or months to build for a new data source. Data virtualisation can connect a new source and expose it through the semantic layer in hours. For business teams waiting on new data to make decisions, this is transformational.

Reduced data redundancy and storage costs

Every physical copy of data has a cost — storage, compute, maintenance, and the hidden cost of keeping it synchronised. Virtualisation eliminates unnecessary copies, reducing storage overhead and the operational burden of keeping multiple systems in sync.

Single source of truth for business definitions

One of the most insidious problems in large organisations is metric inconsistency — the sales team's definition of "revenue" differs from finance's, which differs from the CFO dashboard's. Data virtualisation enforces a single semantic layer where business metrics, entities, and hierarchies are defined once and consumed everywhere.
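As a rough illustration of "defined once, consumed everywhere", the sketch below keeps a metric formula in a single registry that two consumers share. The metric name, its formula, and the consumer functions are all hypothetical; real semantic layers have far richer modelling than this.

```python
# One shared definition of each business metric (hypothetical formula).
METRICS = {
    "net_revenue": lambda row: row["gross"] - row["refunds"] - row["tax"],
}


def compute(metric, rows):
    """Evaluate a named metric over rows using the shared definition."""
    formula = METRICS[metric]
    return sum(formula(row) for row in rows)


# Two consumers that would otherwise each hand-roll their own "revenue".
def sales_dashboard(rows):
    return compute("net_revenue", rows)


def finance_report(rows):
    return compute("net_revenue", rows)


ORDERS = [
    {"gross": 100, "refunds": 10, "tax": 20},
    {"gross": 50, "refunds": 0, "tax": 10},
]
```

Because both consumers resolve the metric through `METRICS`, changing the formula changes it for everyone at once, which is the property that removes the sales-versus-finance disagreement.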

Accelerating data democratisation

By abstracting the technical complexity of source systems, virtualisation allows data teams to publish clean, governed, business-friendly data products that non-technical users can access directly in their BI tools, notebooks, or AI assistants — without requiring SQL expertise or knowledge of underlying schemas.

Supporting data fabric and mesh architectures

Data virtualisation is the connective tissue of the modern data fabric. It enables the federated governance model that data mesh requires, providing centralised policy enforcement without centralised data storage.

Resilience and agility

When a source system is migrated, upgraded, or replaced, a virtualisation layer acts as an abstraction barrier — consumers of data don't need to change their queries or reports. Only the underlying connector is updated. This architectural resilience can save significant rework costs during technology transitions.
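The abstraction barrier can be sketched as a view that depends only on a connector interface. Everything here is assumed for illustration: the two connector classes, their field names, and the `CustomerView` class do not correspond to any vendor's API.

```python
from typing import Any, Dict, Iterable, List, Protocol


class Connector(Protocol):
    """What the virtual layer needs from any customer source."""

    def fetch_customers(self) -> Iterable[Dict[str, Any]]: ...


class LegacyCrmConnector:
    def fetch_customers(self):
        raw = [{"CUST_ID": 1, "CUST_NM": "Acme"}]  # legacy field names
        return [{"id": r["CUST_ID"], "name": r["CUST_NM"]} for r in raw]


class NewCrmConnector:
    def fetch_customers(self):
        raw = [{"accountId": 1, "displayName": "Acme"}]  # new system's fields
        return [{"id": r["accountId"], "name": r["displayName"]} for r in raw]


class CustomerView:
    """The stable, published view that reports and applications query."""

    def __init__(self, connector: Connector):
        self._connector = connector

    def swap_connector(self, connector: Connector) -> None:
        # A migration changes only this binding; consumers are untouched.
        self._connector = connector

    def names(self) -> List[str]:
        return sorted(row["name"] for row in self._connector.fetch_customers())


view = CustomerView(LegacyCrmConnector())
before_migration = view.names()
view.swap_connector(NewCrmConnector())
after_migration = view.names()
```

Both calls to `view.names()` return the same result even though the source system changed underneath. Each connector maps its own field names onto the view's schema, so the mapping work stays in one place.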

6. Anti-Pattern: Using Your Virtualisation Layer as a Compute Engine

This is one of the most expensive mistakes in enterprise data virtualisation deployments, and it's surprisingly common.

The scenario looks like this. A team sets up a data virtualisation platform over a data lake, connects it to their BI tools, and starts building views. Business users begin running reports. Everything works. Then the bills arrive.

What's actually happening under the hood is this: every time a dashboard loads or a report refreshes, the virtualisation layer is reaching into the raw data lake, scanning billions of rows, and computing aggregations (GROUP BY region, SUM(revenue), COUNT(DISTINCT customer_id)) from scratch. Every. Single. Time. The same computation, repeated on every query, for every user, on every refresh cycle.

This is not what a virtualisation layer is for. It is a semantic and governance layer, designed to serve pre-structured data assets efficiently, not to replace the transformation layer that should have built those assets in the first place.

Virtualisation platforms are typically licensed or priced on data volume processed, query concurrency, or compute units consumed. When every query is scanning raw tables and computing aggregations on the fly, all of those metrics spike, often dramatically. You end up paying virtualisation-layer rates for work that should have been done once, stored, and served cheaply.

The deeper irony is that this pattern hurts twice. The underlying data lake absorbs heavy compute load at query time instead of during scheduled batch processing. And the virtualisation platform consumes capacity doing work it was never designed to do repeatedly. Neither system is being used correctly, and you are paying for both inefficiencies simultaneously.
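A back-of-the-envelope model makes the gap visible. The function below simply counts rows scanned per day under each approach. The five-billion-row table echoes the earlier aggregation example, while the aggregate size and the number of dashboard loads are made-up inputs, so read the result as an order of magnitude rather than a benchmark.

```python
def rows_scanned_per_day(raw_rows, aggregate_rows, dashboard_loads,
                         batch_refreshes=1):
    """Compare daily scan volume for on-the-fly versus materialised serving.

    Returns (on_the_fly, materialised) row counts.
    """
    # Anti-pattern: every dashboard load rescans the raw table.
    on_the_fly = raw_rows * dashboard_loads
    # Materialised: raw table scanned once per batch refresh, then each
    # dashboard load reads only the much smaller aggregate.
    materialised = raw_rows * batch_refreshes + aggregate_rows * dashboard_loads
    return on_the_fly, materialised


# Assumed inputs: 5 billion raw rows, a 10,000-row aggregate,
# and 2,000 dashboard loads per day.
on_the_fly, materialised = rows_scanned_per_day(5_000_000_000, 10_000, 2_000)
```

Under these assumptions the on-the-fly approach scans roughly two thousand times more rows per day than the materialised one. On a platform priced by data processed, that ratio flows straight through to the bill.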

7. The Leaders in the Space: Gartner Says ...

Gartner evaluates the data virtualisation landscape primarily through its Magic Quadrant for Data Integration Tools — reflecting the reality that virtualisation has become deeply integrated with the broader data integration market rather than existing as a standalone category.

The December 2024 edition of the report evaluated 20 vendors, and the Leaders quadrant tells a revealing story about where the market has matured.

Denodo — the most specialised data virtualisation vendor in the quadrant — was recognised as a Leader for the fifth consecutive year. Denodo's strength lies in its depth: enterprise-grade semantic modelling, fine-grained security, extensive connector library, and a strong data fabric story. It remains the benchmark against which all other virtualisation capabilities are measured.

IBM — a stalwart of enterprise data integration — has been named a Leader for an extraordinary 20 consecutive years (as of 2025). IBM's DataStage and Cloud Pak for Data suite incorporate strong virtualisation capabilities, particularly valued by organisations already deep in the IBM ecosystem.

Microsoft was recognised as a Leader in the 2024 report, driven by Microsoft Fabric's unified analytics platform which incorporates virtualisation through its OneLake architecture and cross-source query capabilities.

TIBCO (now part of the Cloud Software Group) has historically been a strong player in this space with TIBCO Data Virtualization, serving large enterprises with complex integration requirements.

Beyond the Magic Quadrant, several newer entrants are reshaping the landscape:

Starburst (built on Trino) is becoming a popular open-source-based alternative for organisations wanting distributed SQL federation without a proprietary platform

Dremio positions itself as a lakehouse-native virtualisation and acceleration layer

Databricks Unity Catalog is increasingly incorporating data sharing and virtualisation capabilities natively within the lakehouse context

What Gartner's research consistently highlights is that the distinction between "data virtualisation" and "data integration" is dissolving. The future belongs to platforms that do both — enabling logical access and physical integration as context demands, governed by a unified semantic and metadata layer.

8. Conclusion: The Architecture Is Finally Catching Up to the Problem

For years, enterprise data architecture was built around a simple assumption: to use data, you must first move it. That assumption created an entire industry — ETL tools, data warehouses, replication pipelines — and a generation of data engineers whose primary job was plumbing.

Data virtualisation challenges that assumption at its foundation. It says: what if you didn't have to move the data? What if the semantic layer was the architecture?

The honest answer is that virtualisation alone cannot do everything. For complex enterprises — the ones dealing with enriched business views, high-concurrency dashboards, windowed aggregations, and ML workloads — ETL remains load-bearing, not legacy. The goal is not to replace it but to be precise about which layer does which job. Virtualise what needs to be fresh and flexible. Materialise what needs to be fast, enriched, and preserved.

But for the majority of query patterns — operational reporting, data product APIs, governed self-service analytics, and real-time AI agent queries — virtualisation is not just viable. It is often the better choice.

The market is converging on a hybrid model: virtualisation as the authoritative semantic and governance layer, physical storage as a derived performance tier. In this model, the lakehouse doesn't disappear — it becomes a cache you could rebuild at any time. The virtualisation layer becomes the thing you couldn't rebuild: the accumulated business logic, governance rules, and semantic definitions that make data actually mean something.

As agentic AI matures, as data mesh adoption grows, and as enterprises continue to accumulate SaaS sprawl, the case for virtualisation-first architecture will only strengthen.

If this resonated with you, I'd love to hear your thoughts — especially if you're working through data virtualisation decisions in your own organisation. Drop a comment or connect with me on LinkedIn.
