Graph RAG: Beyond Vector Search in Retrieval-Augmented Generation

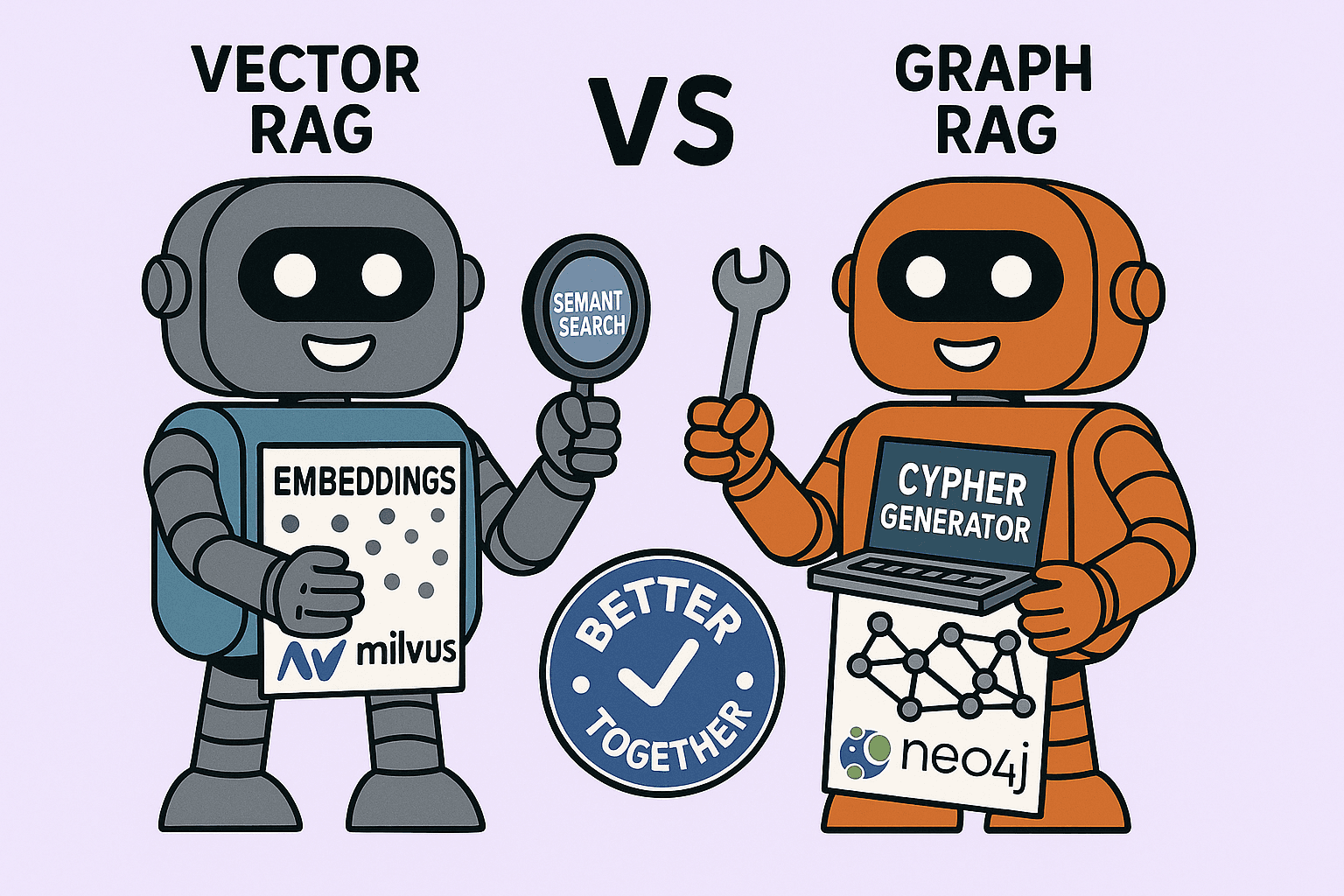

A hands-on comparison of Graph RAG and Vector RAG, their trade-offs, and the power of hybrid approaches.

Retrieval-Augmented Generation (RAG) has quickly become one of the most powerful techniques for grounding large language models (LLMs). Instead of expecting the model to “know everything,” we feed it relevant external knowledge retrieved at query time.

Most implementations use Vector RAG: documents are chunked, embedded, stored in a vector database, and retrieved by similarity. This works well for many questions, especially factoid queries.

But what happens when your question is about relationships instead of just keywords or themes?

That’s where Graph RAG comes in. And when you combine the two, you get the best of both worlds.

About my Application

To explore this space, I built a small application that lets me ask natural language questions about movies, actors, directors, genres, countries, and languages, and then compare how Vector RAG and Graph RAG answer them.

Frontend: A simple React app with a query box.

Backend components:

Neo4j → Knowledge graph of movies, actors, directors, genres, languages, and countries.

Milvus → Vector database for semantic similarity search over unstructured movie descriptions and metadata.

Ollama → Local LLM that turns query results into natural-language answers.

The app shows juxtaposed responses from Graph RAG and Vector RAG, including execution time, confidence, and retrieved context. This setup makes it easy to see strengths, weaknesses, and why a hybrid RAG system often makes the most sense.

How the Flows Differ

Here’s what happens under the hood when you ask a question.

🔹 Graph RAG Flow

User Query

↓

LLM Call 1 → Extract entities & relations

↓

LLM Call 2 → Generate Cypher query

↓

Neo4j (Graph Query Execution)

↓

LLM Call 3 → Synthesize final response

↓

Answer

🔹 Vector RAG Flow

User Query

↓

Generate Query Embedding

↓

Vector Database (Milvus) Search

↓

LLM Call → Synthesize final response

↓

Answer

👉 Graph RAG requires multiple LLM calls but produces highly precise, explainable results.

👉 Vector RAG is faster and fuzzier (relatively speaking), great for semantic overlap but weaker at multi-hop reasoning.

Real Examples

Complex Queries: Graph vs Vector RAG

Query: “Movies where Amy Irving and Matthew McConaughey have acted together in the same movie.”

Graph RAG → 🎬 Thirteen Conversations About One Thing (2001) — correct.

Vector RAG → 🎬 U-571 and 🎬 Contact — both McConaughey films, but no Amy Irving.

Takeaway: Vector retrieval is about semantic similarity. Graph retrieval is about explicit relationships.

Simple Queries: Graph vs Vector RAG

Query: “Who directed Kabhi Alvida Naa Kehna?”

Both approaches return: Karan Johar ✅

Graph RAG: Precise, but involves multiple hops (entities → Cypher → execution → synthesis).

Vector RAG: Cheaper, faster, and good enough for factoid queries.

Takeaway:

For simple factoid queries, Vector RAG can match Graph RAG’s accuracy at a lower cost and latency.

For complex relational queries, Graph RAG shines.

Where Graph RAG Shines

Structured reasoning: Multi-hop queries like “actors who worked with Christopher Nolan and Steven Spielberg.”

High precision: Prevents hallucinations by relying on explicit graph edges.

Complex domains: Movies, medicine, finance, or supply chains where relationships matter.

Where Graph RAG Fails

Fuzziness: Queries like “movies about space exploration” may miss results if not encoded explicitly in the graph.

Cold start: If the graph doesn’t contain the fact, nothing is retrieved.

High upfront effort: Building and maintaining the graph schema and ingestion pipeline takes work. In my application I had to design a graph schema first and then load it in neo4j. With vector, I simply uploaded the text to Milvus chunked by movie, not much effort.

Cold Start in Graph vs Vector RAG

The cold start problem affects both approaches:

Graph RAG: If the node/relationship isn’t ingested, queries return nothing.

Vector RAG: If a document isn’t embedded and indexed, similarity search won’t find it.

The difference is in end user experience:

Graph’s missing data is immediately obvious.

Vector’s missing data feels less harsh because embeddings “cover” broader text.

This again makes the case for hybrid RAG: vectors for coverage, graphs for precision.

Latency: Graph vs Vector RAG

Graph RAG: Multiple LLM calls → more accurate, but slower.

Vector RAG: Single LLM call after retrieval → faster, especially for factoid queries.

Latency grows with query complexity:

Graph adds reasoning steps.

Vector mostly waits on the LLM’s answer.

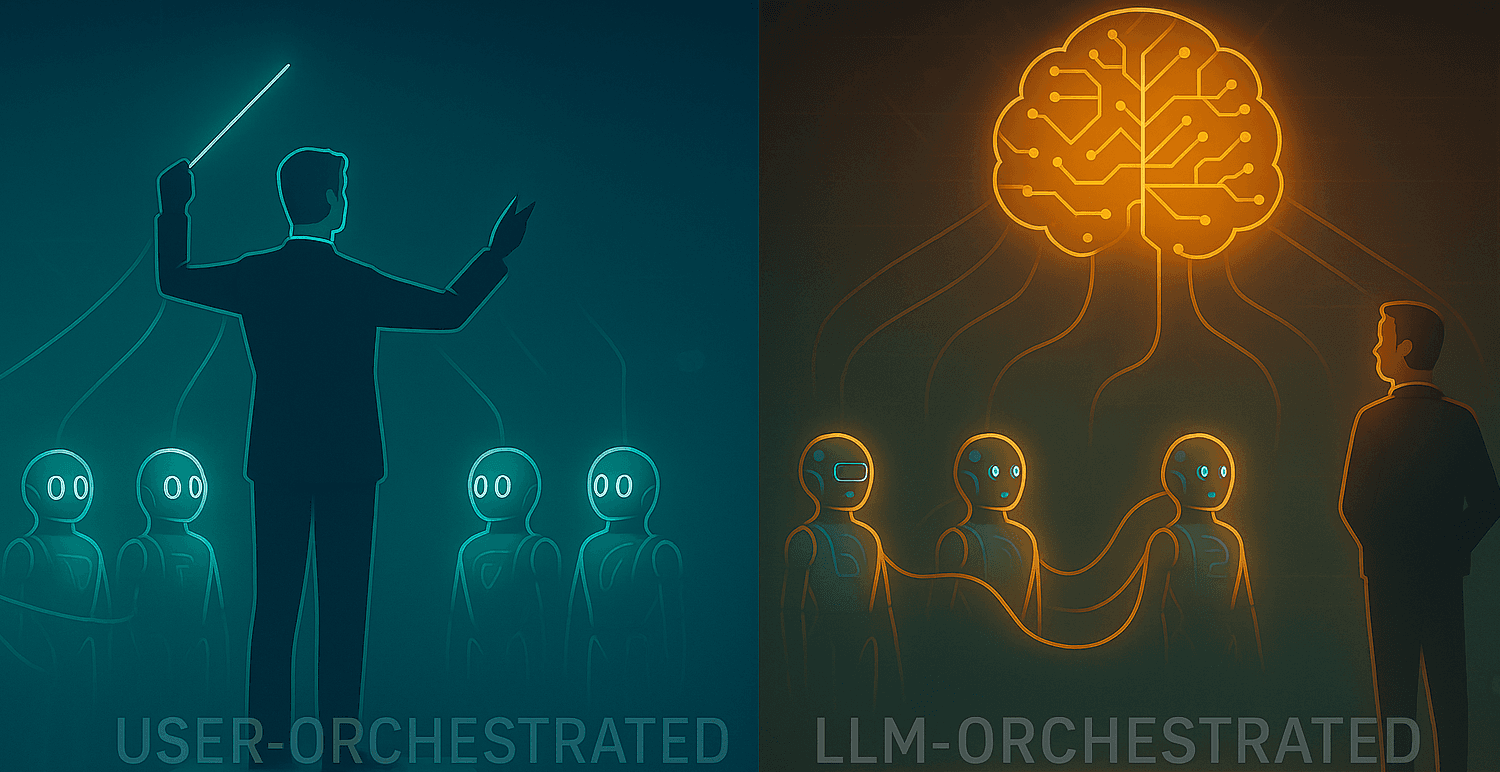

Hybrid RAG: Best of Both Worlds

A good RAG application doesn’t pick one — it orchestrates both.

Smart Routing Flow

LLM Classifies Query

Factoid/simple → Vector RAG.

Relational/multi-hop → Graph RAG.

Dynamic Invocation of the right retriever.

Fallback Flow: If uncertain, try Vector first (fast, fuzzy), then Graph for relational verification.

User Query

↓

LLM Call → Classify Query Type

↓

┌─────────────┬─────────────┐

│ Factoid │ Relational │

│ → Vector RAG│ → Graph RAG │

└─────────────┴─────────────┘

↓

If uncertain → Fallback: Vector → Graph

↓

Final Answer

Case Study: The “Bahubali” Query

Query: “Which other movies has the director of Bahubali directed?”

Graph RAG alone → Fails because the graph database has the following “Bahubali: The Beginning” or “Bahubali 2: The Conclusion”. It Requires exact match (“Bahubali: The Beginning”).

Vector RAG alone → Finds the right movie via similarity, but weak on relational precision.

Hybrid solution:

Vector Retrieval → Finds candidate titles (Bahubali: The Beginning, Bahubali 2: The Conclusion).

Query Augmentation → Rewrite: “Which other movies has the director of Bahubali: The Beginning directed?”

Graph Query → Fetches the precise Director node.

Response Synthesis → “Bahubali: The Beginning was directed by S. S. Rajamouli.”

✅ Vector fixes fuzziness. Graph ensures correctness.

Side-by-Side Comparison

| Aspect | Graph RAG | Vector RAG |

| Cold start | Needs explicit nodes/relationships | Needs embedding; no index = no result |

| Fuzziness | Weak (exact matches) | Strong (semantic similarity) |

| Schema effort | High (domain modeling) | Low (just embed text) |

| Explainability | Very high (explicit edges) | Lower (similarity scores) |

| Complex reasoning | Strong (multi-hop queries) | Weak |

| Scalability | Can be expensive on dense graphs | Scales with embeddings |

| Coverage | Limited to modeled facts | Broad, unstructured text |

Conclusion

Graph RAG is not a replacement for vector search — it’s a complement.

When relationships matter → Graph RAG wins.

When themes or fuzzy matching matter → Vector RAG wins.

When you want both coverage and precision → Hybrid RAG is the future.

Ultimately, the exact flow depends on your context and goals:

Do you need the highest accuracy, even if it’s slower?

Or do you need fast, fuzzy answers with acceptable trade-offs?

A well-designed hybrid RAG system lets you optimize for both — while keeping users in the loop with transparency about what’s happening behind the scenes.